You might be familiar with UX Design, but maybe not with the human-computer interaction field. This academic research field has long been known for closely studying how users handle and control computational interfaces.

What is less known is that the empirical experiments of HCI researchers have largely but quietly inspired the latest and most famous design inventions (no less than the personal computer, software and the mobile interface).

Here are 4 great HCI ideas that have influenced designers to create products that we now know and use every day.

HCI and the Birth of the Graphical User Interface

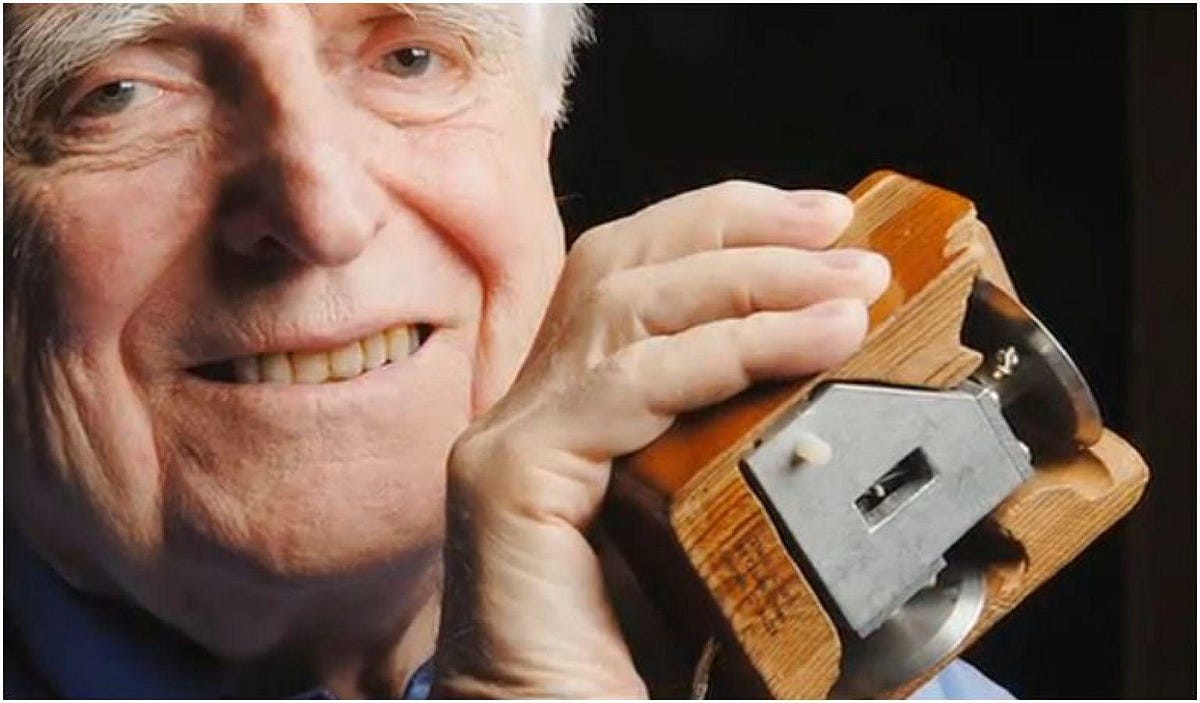

When Ivan Sutherland conceived, in 1962, a way to manipulate symbols on an interface with a tool as intuitive as a light pen, he considered writing-based communication models a waste of time for users. Similarly, a year later, when Douglas Engelbart replaced the light pen with a pointer moved across a surface (what we now call the desktop mouse), he wondered how users could interact directly with a graphical interface.

This primary user experience problem was actually the foundation of the research field around human-machine interaction. To solve it, HCI researchers agreed to use an experimental, empirical method to assess the usability of the first personal computer prototypes. For example, they ran a comparative study of different product concepts (the mouse, the light pen, the knee control and the joystick) to see which was the most effective pointing device. The mouse proved to be the most flexible and accurate for pointing at and selecting an object, although not the fastest (that was the knee control).

Similarly, the invention of the graphical interface and its menu display was informed by quantitative studies that compared how participants performed with different interfaces (menus organized by depth or by breadth). In the same period, as the graphical interface let users issue commands by visual recognition rather than by typing, the designer's work became essential. This is why interaction researchers began to study the interaction between users and their interfaces very closely.

These studies gradually attracted the attention of companies and their design teams, who used the research results and conclusions to guide their work.

The Soft Controls Defining Modern Software

Before the emergence of computers, users only handled hard control buttons in their homes to act on their environment. These control levers were visually differentiated by the response they triggered (a button that turned the light on or off, for example).

The invention of “soft control design”, graphic controllers that don’t literally embody their use, raised new questions for HCI researchers. For example, how do you create an icon that does not take up too much space, clearly indicates what it does and invites action?

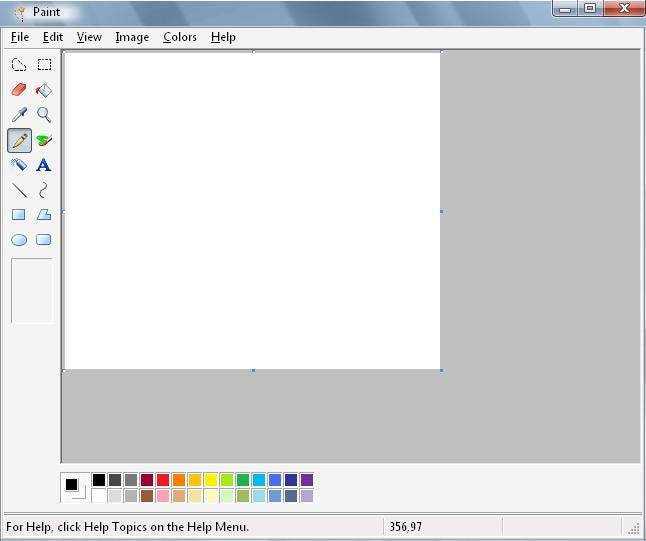

Researchers were particularly interested in how graphical interfaces remind users of familiar hard control levers. Personal computers in particular became filled with graphical objects that tried to metaphorically embody the action they were meant to trigger: for example, an icon representing a brush (to draw in Paint) or a light (to indicate the brightness of the screen).

The movement of a control tool such as a computer mouse is also not always congruent with the graphical motion it produces. When users push a mouse forward across a horizontal desk, the cursor moves up on a vertical screen. This means users have to adapt to a precise spatial transformation. One of the findings of the HCI field to facilitate interaction is to create modes of use, such as the different combinations that the right and left mouse clicks and the keyboard keys allow, in addition to the mouse's scroll wheel.

Both soft controls and modes have defined software design as we know it today.

The Basic Metaphors of Computer Interface

The issue of spatial congruence between device and graphical action defines another perspective brought by HCI science: the role of metaphors in the use of graphical interfaces. While it is easy to guess the function of elevator buttons shaped like up and down arrows, software icons often carry a more abstract meaning, unrelated to natural physical analogies.

More generally, the use of metaphors such as “desktop” or “text file” helps to clarify these functions by relating them to physical tools (a desktop that collects all our files and tools; a file that contains text and can be consulted). And these metaphors are essential to shape the mental models of users, and to forge their expectations and habits when faced with new features.

In a program as classic as Windows Paint, for instance, the choice of icons is carefully thought out in relation to the suggested function: the magnifying glass naturally suggests zooming, and the compass intuitively suggests moving around the interface.

HCI researchers have shown that the most powerful metaphors draw on notions as intuitive as time and space. For example, a clock face communicates numbers much faster than decimal notation. Designers have already used it to help blind people find their way around, by giving them directional indications through the numbers on the dial.
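To make the dial idea concrete, here is a minimal sketch of how a direction could be turned into a clock position. It is an illustration, not a description of any particular product: the function name `clock_direction` and the convention that 0 degrees means "straight ahead" (12 o'clock), with angles growing clockwise, are assumptions made for this example.

```python
def clock_direction(bearing_deg: float) -> int:
    """Map a relative bearing in degrees to the nearest hour on a clock dial.

    Assumed convention (hypothetical, for illustration only):
    0 degrees is straight ahead (12 o'clock) and angles grow clockwise,
    so 90 degrees is to the right (3 o'clock).
    """
    # Each hour on the dial covers 30 degrees; shifting by 15 rounds
    # the bearing to the nearest hour instead of truncating it.
    hour = int(((bearing_deg % 360) + 15) // 30) % 12
    # A dial has no "0 o'clock": straight ahead is 12.
    return 12 if hour == 0 else hour


def spoken_hint(bearing_deg: float) -> str:
    """Build the short phrase a guidance aid might read out loud."""
    return f"Target at {clock_direction(bearing_deg)} o'clock"


if __name__ == "__main__":
    for bearing in (0, 90, 180, 270):
        print(bearing, "->", spoken_hint(bearing))
```

The point of the design is that twelve coarse positions are enough to orient someone quickly, where an exact angle would take longer to interpret.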

The Interaction Research Behind the iPhone

With all these studies, the field of HCI has naturally inspired the designers behind the most widely used graphical interface inventions of recent years. HCI researchers saw especially great opportunities in interfaces that allow direct interaction, such as touch screens.

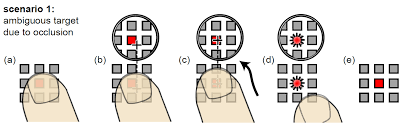

However, to make a touch screen interface possible, they faced the “fat finger” problem, also called the occlusion problem. Because of the size and shape of our fingers, we cannot select an element with pixel accuracy as we can with a mouse cursor, and this can make it difficult to select objects. One of the ideas the researchers came up with was an offset cursor, which appears when the finger touches the screen and lets the user aim more precisely at a small object.

Two ways of applying this concept were tested for effectiveness. One made the selection at the moment the finger first touched the screen. The other held the selection back after the screen was touched, so that it was only made once the finger was lifted. The second finally proved to be more efficient. And this is what the designers of the first iPhone retained when they created the multitouch technology that has become so important today.
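The difference between the two strategies can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the researchers' or Apple's implementation: the `Target` class, the 40-pixel `CURSOR_OFFSET_Y`, and the reading of the second strategy as "select where the cursor is when the finger lifts off" are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical value: how far above the finger the cursor is drawn, in pixels.
CURSOR_OFFSET_Y = 40

Point = Tuple[float, float]


@dataclass
class Target:
    """A rectangular on-screen object (illustrative, not a real toolkit class)."""
    name: str
    x: float
    y: float
    width: float
    height: float

    def contains(self, px: float, py: float) -> bool:
        return (self.x <= px <= self.x + self.width
                and self.y <= py <= self.y + self.height)


def cursor_position(touch: Point) -> Point:
    """Place the cursor above the finger so the finger does not hide it.

    Screen coordinates are assumed to grow downward, so "above" means a smaller y.
    """
    touch_x, touch_y = touch
    return touch_x, touch_y - CURSOR_OFFSET_Y


def hit_test(targets: List[Target], point: Point) -> Optional[Target]:
    """Return the first target under the point, or None."""
    for target in targets:
        if target.contains(*point):
            return target
    return None


def select_on_first_contact(targets: List[Target], touch_path: List[Point]) -> Optional[Target]:
    """Strategy 1: commit to whatever is under the cursor at first touch."""
    return hit_test(targets, cursor_position(touch_path[0]))


def select_on_lift_off(targets: List[Target], touch_path: List[Point]) -> Optional[Target]:
    """Strategy 2: let the user drag to adjust, then commit when the finger lifts."""
    return hit_test(targets, cursor_position(touch_path[-1]))
```

With a small button at (100, 100) and a finger that lands slightly to the left before sliding right, `select_on_first_contact` misses while `select_on_lift_off` hits the button, because the user had time to correct the cursor before committing.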

Towards Gaze-Driven Computer Interactions?

HCI's findings and influence don’t stop there: they keep inspiring designers’ inventions today.

For example, the recent trend of accelerometer-based products derives from long-running research on the topic, especially on detecting screen tilt and handling it intuitively. Another idea has been to use eye movement to let users control objects. While the technology is not yet mature, researchers have already shown that it could become an essential ingredient in the functionality of future products.